In the first part of this two-post series, I explained the core concepts behind multicloud networking. I also discussed necessary protocols, the relevant networking services offered by the three major public cloud providers, and how multicloud infrastructure works.

Now that you have a foundation of networking theory, I’ll show you how to put that knowledge into practice. In this post, I’ll walk you through designing a multicloud network utilizing AWS, GCP, and your on-premises environment.

ACME Corp Goes Multicloud

For the purpose of this demonstration, I’ll focus on the famous (and fictional) ACME Corporation and their cloud journey.

Let’s say ACME established its data center decades ago, and when AWS launched in 2006, they decided to join forces with Amazon, starting with popular AWS services like EC2, S3, and RDS. A couple of years later, they used AWS Site-to-Site VPN to set up a private, encrypted connection between their data center and AWS, so they no longer had to access AWS services directly over the public internet.

Cloud engineers and technical management were happy with what AWS brought to ACME Corp’s IT, but they started to fear vendor lock-in. What if Amazon decided to stop offering hosting services to companies it deemed competitors? If it did, ACME would have to scramble to find another provider.

ACME’s technical staff also wanted to reduce costs and try services that AWS doesn’t offer by working with multiple cloud providers.

Kubernetes, through Google’s managed GKE service, was especially interesting to ACME. They reasoned that, since Google invented Kubernetes, GKE would offer them the most features out of the box and would be the first among public cloud providers to introduce newer versions and major updates.

With this in mind, the decision was made to implement a multicloud network with Google Cloud Platform and to connect it to their existing AWS network and on-premises data center.

But where to start?

Designing a Blueprint for Multicloud Networking

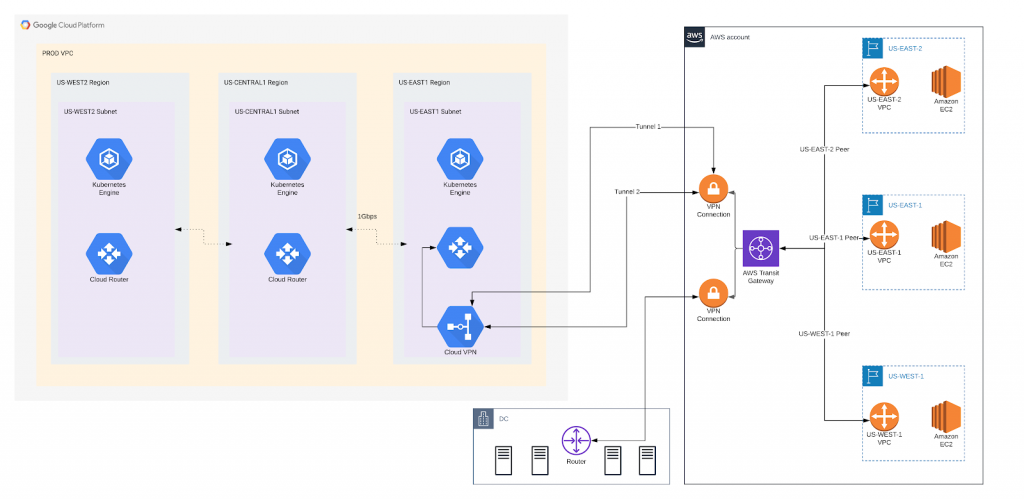

ACME Corp’s business focuses on the U.S. market, so they’ve organized their AWS presence in three regions: us-east-1, us-east-2, and us-west-2, with us-east-1 as their primary region. Their compute workloads run on EC2 instances deployed across all three regions, and VPC peering maintains connectivity between the regions. The VPN connection to the data center uses redundant VPN tunnels that terminate in the us-east-1 region.

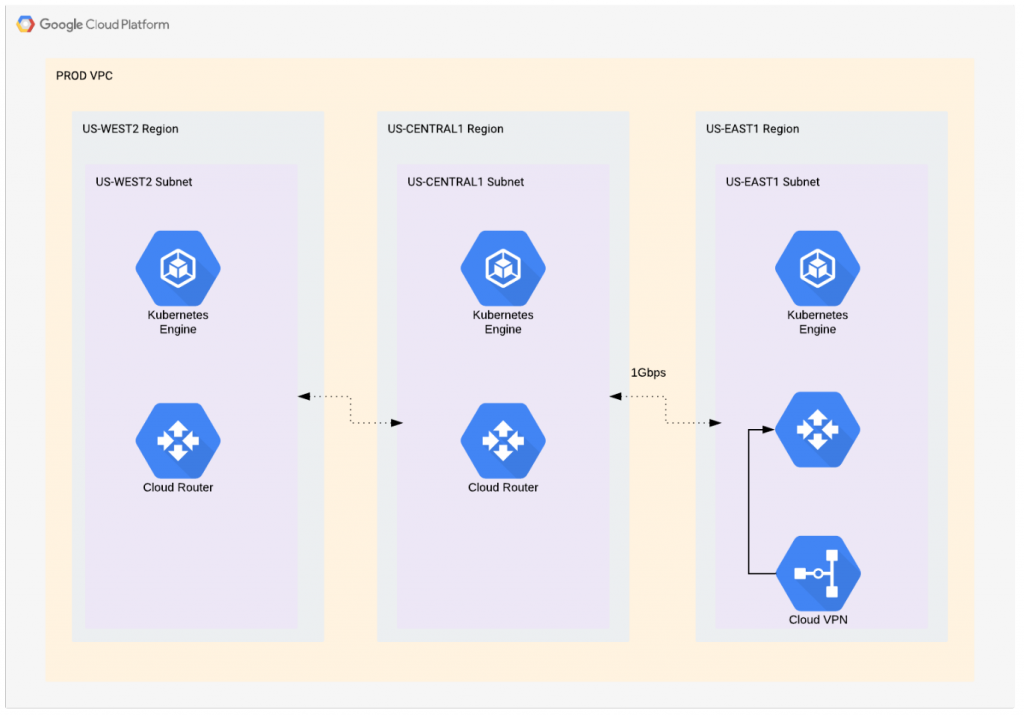

Naturally, they want a similar setup on GCP: three regions, a GKE cluster in each, and VPN termination in the us-east1 GCP region. Keeping the primary regions of both public clouds on the U.S. East Coast should keep latency between them low.

The diagram in Figure 1 makes the plan look simple. Deploy a GCP environment, peer the GCP networks, set up a VPN connection with two tunnels, connect to AWS, and voilà! They’ve gone multicloud.

But before they can implement this simple plan, they must make other changes based on Amazon’s and Google’s recommended best practices.

(Re)Configuring the AWS Side

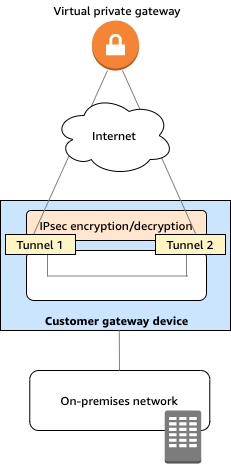

Before introducing another public cloud provider into its network topology, ACME Corp has to follow Amazon’s recommended best practice for VPN connections: one VPN connection to the on-premises data center with two redundant VPN tunnels. For that setup, they need a VPN gateway (formally a virtual private gateway, the VPN endpoint on the AWS side) and a customer gateway (an AWS resource that describes the on-premises VPN device, such as its public IP address and, for dynamic routing, its BGP ASN).

Figure 2: AWS Site-to-Site VPN connection (Source: aws.amazon.com)

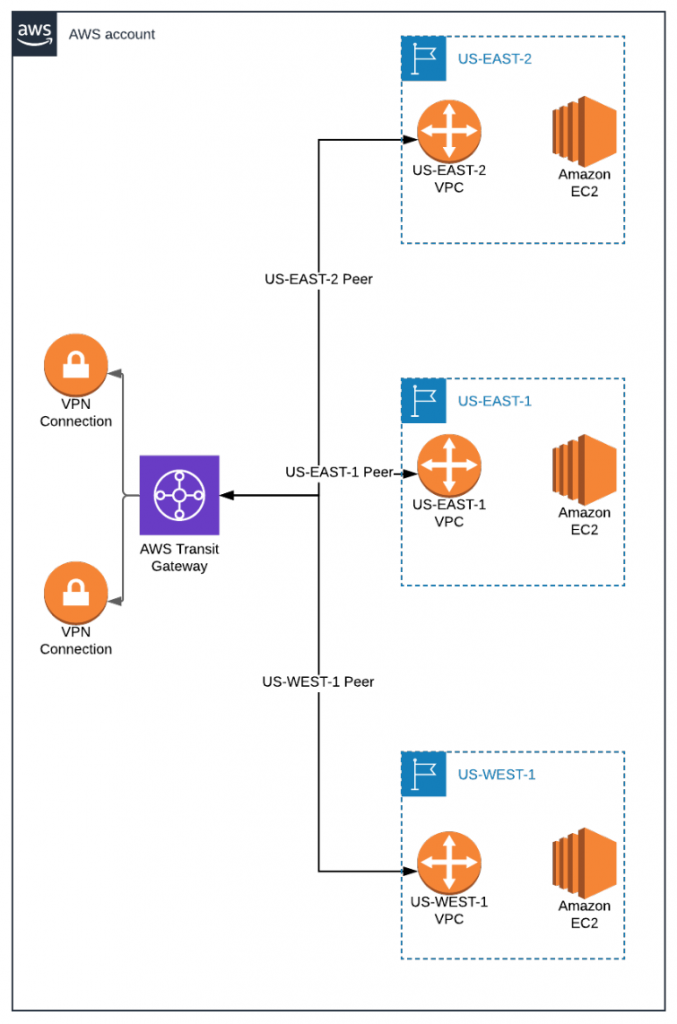

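To maintain connectivity between all of its virtual private clouds in U.S. regions, ACME Corp has to establish multiple VPC peering connections and maintain multiple route tables, even when using the BGP dynamic routing protocol. VPC peering is not transitive, so every pair of VPCs that needs to communicate requires its own peering connection.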
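To make the scaling problem concrete, here is a small Python sketch (my own illustration, not an AWS tool) that counts the peering connections a full mesh of n VPCs needs, n(n-1)/2, against the single attachment per VPC that a hub design such as Transit Gateway needs. The function names are hypothetical.

```python
def full_mesh_peerings(n: int) -> int:
    """Peering connections needed so every pair of n VPCs is directly connected."""
    return n * (n - 1) // 2


def hub_attachments(n: int) -> int:
    """Attachments needed when each of n VPCs connects once to a central hub."""
    return n


if __name__ == "__main__":
    for n in (3, 10, 50):
        print(f"{n} VPCs: {full_mesh_peerings(n)} peerings vs {hub_attachments(n)} attachments")
```

For ACME’s three VPCs the two counts happen to match, so the savings there come from fewer gateways and route tables. The gap grows quickly as VPCs are added: ten VPCs need 45 peerings but only ten attachments.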

AWS released a solution to this problem in 2018: AWS Transit Gateway. This managed network service lets enterprises connect thousands of VPCs and on-premises networks through a single gateway.

With Transit Gateway added to ACME Corp’s AWS networking, the required configuration is as follows:

- Set up three Transit Gateway attachments to connect all three VPCs to the Transit Gateway

- Reconfigure the existing VPN connection to the on-premises data center to use the Transit Gateway instead of the VPN gateway

- Set up a new VPN connection to GCP on the Transit Gateway

Instead of maintaining two Customer Gateways, two VPN Gateways, three VPC peerings, and multiple route tables (for each VPC and VPN), AWS makes it possible for ACME to consolidate all of their networking configurations on one Transit Gateway with one Transit Gateway Route Table.
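The consolidated routing can be pictured with a toy Python model of a single Transit Gateway route table: each destination CIDR maps to an attachment, and when several prefixes match, the most specific one wins. The CIDR blocks and attachment names are hypothetical, since ACME’s address plan isn’t given, and in practice the VPN entries would be propagated by BGP rather than typed in by hand.

```python
from __future__ import annotations

import ipaddress

# Hypothetical address plan; the attachment names are illustrative labels,
# not real Transit Gateway attachment IDs.
ROUTE_TABLE = {
    "10.0.0.0/16": "attach-vpc-us-east-1",
    "10.1.0.0/16": "attach-vpc-us-east-2",
    "10.2.0.0/16": "attach-vpc-us-west-2",
    "172.16.0.0/12": "attach-vpn-onprem",
    "10.128.0.0/9": "attach-vpn-gcp",
}


def next_hop(route_table: dict[str, str], destination: str) -> str | None:
    """Return the attachment for destination using longest-prefix match."""
    dest = ipaddress.ip_address(destination)
    matches = [
        (ipaddress.ip_network(prefix), target)
        for prefix, target in route_table.items()
        if dest in ipaddress.ip_network(prefix)
    ]
    if not matches:
        return None  # no matching route: traffic is not forwarded
    # When several prefixes match, the most specific (longest) one wins.
    return max(matches, key=lambda match: match[0].prefixlen)[1]


if __name__ == "__main__":
    for ip in ("10.2.5.10", "10.130.0.5", "172.20.1.1", "192.168.1.1"):
        print(ip, "->", next_hop(ROUTE_TABLE, ip))
```

One table answers "where does this packet go?" for every VPC and both VPNs, which is the consolidation the paragraph above describes.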

Much to their relief.


Configuring the GCP Side

ACME Corp wants to replicate their region placement on the GCP side: three regions distributed throughout the U.S. to minimize latency for customers, regardless of where they’re located in the country.

Since Google has a cloud region in the central U.S. (whereas AWS’s U.S. regions are grouped as US East and US West), ACME has made the smart choice to deploy some workloads in the us-central1 GCP region.

Since ACME Corp is terminating the multicloud VPN in the us-east1 region, their Cloud VPN gateway, which is a regional resource, needs to be created in us-east1, in the VPC network that hosts their us-east1 workloads.

With this service, ACME can establish two redundant tunnels to AWS and use dynamic BGP routing (handled on the GCP side by Cloud Router) to exchange routes between the VPCs on both ends. Because BGP advertises GCP routes to AWS and vice versa, ACME doesn’t need to set up any static routes or constantly maintain and verify static route tables.
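Why two tunnels plus BGP removes the need for hand-maintained routes can be shown with a deliberately simplified Python model, not how Cloud Router or Transit Gateway are actually implemented: each tunnel carries its own BGP session, a prefix stays reachable while at least one session advertising it is up, and a session that goes down withdraws its routes. The tunnel names and prefixes are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Tunnel:
    """One VPN tunnel with its own BGP session (hypothetical toy model)."""
    name: str
    up: bool = True
    # Prefixes the remote side advertises over this tunnel's BGP session.
    advertised: set[str] = field(default_factory=set)


def learned_routes(tunnels: list[Tunnel]) -> dict[str, list[str]]:
    """Map each reachable prefix to the tunnels currently advertising it."""
    routes: dict[str, list[str]] = {}
    for tunnel in tunnels:
        if not tunnel.up:
            continue  # session down: its routes are treated as withdrawn
        for prefix in sorted(tunnel.advertised):
            routes.setdefault(prefix, []).append(tunnel.name)
    return routes


if __name__ == "__main__":
    gcp_prefixes = {"10.128.0.0/20", "10.132.0.0/20"}  # hypothetical GCP ranges
    tunnels = [
        Tunnel("tunnel-1", advertised=set(gcp_prefixes)),
        Tunnel("tunnel-2", advertised=set(gcp_prefixes)),
    ]
    print(learned_routes(tunnels))  # each prefix via tunnel-1 and tunnel-2
    tunnels[0].up = False           # simulate a tunnel failure
    print(learned_routes(tunnels))  # each prefix now via tunnel-2 only
```

After tunnel-1 fails, both prefixes remain reachable through tunnel-2 without anyone editing a route table.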

If ACME’s on-premises network devices (routers and firewalls from vendors such as Cisco, Juniper, Palo Alto, Check Point, Fortinet, or others) support BGP, they can eliminate static routes across their entire network. This makes the network more resilient, because routes update automatically when a tunnel or link fails, and it eases the day-to-day work of their network specialists, cloud architects, and DevOps engineers.

Unfortunately, GCP doesn’t have a direct equivalent of Amazon’s Transit Gateway. To connect their GCP VPCs and let all internal GCP networks reach AWS and the on-premises data center over the VPN, ACME would need to use VPC Network Peering between the VPC that hosts Cloud VPN and the other GCP VPCs.
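Peering alone may not be enough. As I understand GCP’s documented behavior, routes that Cloud Router learns over the VPN are custom routes, which cross a peering only when the exporting network enables custom route export and the importing network enables custom route import (both are off by default). In addition, Cloud Router’s default advertisements cover only its own VPC network’s subnets, so peered ranges have to be added as custom advertisements before AWS learns them. The Python sketch below models just those two rules; the network names and ranges are hypothetical, and the details are worth confirming against current GCP documentation.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Peering:
    """One direction of a GCP VPC Network Peering, simplified.

    In GCP, export is set on the exporting network's side of the peering and
    import on the importing network's side; this toy model stores both here.
    """
    exporter: str
    importer: str
    export_custom_routes: bool = False
    import_custom_routes: bool = False


def learns_vpn_routes(peerings: list[Peering], hub: str, spoke: str) -> bool:
    """True if routes the hub's Cloud Router learned over VPN reach spoke."""
    for p in peerings:
        if p.exporter == hub and p.importer == spoke:
            # BGP-learned routes are custom routes, so both flags must be on.
            return p.export_custom_routes and p.import_custom_routes
    # No direct peering: VPC Network Peering is not transitive.
    return False


def advertised_to_aws(own_subnets: set[str], peered_subnets: set[str], custom_mode: bool) -> set[str]:
    """Ranges the hub's Cloud Router advertises to AWS in this simplified model."""
    if not custom_mode:
        # Default mode: only the Cloud Router's own VPC subnets
        # (in reality also subject to the network's dynamic routing mode).
        return set(own_subnets)
    # Custom mode, keeping the all-subnets group and adding peered ranges.
    return set(own_subnets) | set(peered_subnets)


if __name__ == "__main__":
    peerings = [
        Peering("vpc-us-east1", "vpc-us-central1", export_custom_routes=True, import_custom_routes=True),
        Peering("vpc-us-east1", "vpc-other", export_custom_routes=True),  # import left off
    ]
    print(learns_vpn_routes(peerings, "vpc-us-east1", "vpc-us-central1"))  # True
    print(learns_vpn_routes(peerings, "vpc-us-east1", "vpc-other"))        # False
```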

AWS-GCP Multicloud Networking Key Takeaways & Best Practices

Before I wrap up this second article in the two-part series, here are a few notes on the practical AWS-GCP multicloud networking example presented here:

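- Avoid overlapping network ranges. No two segments anywhere in your network, such as VPC CIDRs, subnet CIDRs, or on-premises ranges, can share addresses.

- Avoid reusing BGP ASNs if you use dynamic routing. Each BGP speaker in this design (the Transit Gateway, the GCP Cloud Router, and your on-premises routers) needs its own ASN; you cannot have the same BGP ASN twice anywhere in your network.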

- Create Transit Gateway attachments for each VPC and VPN connection. In the setup we presented, you would have five of them (three VPCs plus two VPN attachments).

- If you have multiple accounts on the AWS side, instead of one account with multiple VPCs, you’ll need to use AWS Resource Access Manager to share one Transit Gateway with multiple AWS accounts.
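The first two rules above lend themselves to an automated pre-flight check. Here is a minimal Python sketch, using only the standard library, that flags overlapping CIDR blocks and duplicate ASNs in an address plan, and checks whether each ASN falls in the private-use ranges reserved by RFC 6996 (64512 to 65534 and 4200000000 to 4294967294). The network names, CIDRs, and ASNs are hypothetical; 64512 is the default Amazon-side ASN for a new Transit Gateway, and the us-central1 range deliberately collides with the us-east-2 VPC so the check has something to report.

```python
from __future__ import annotations

import ipaddress
from collections import Counter
from itertools import combinations

# Hypothetical address plan for a subset of ACME's networks.
NETWORKS = {
    "aws-vpc-us-east-1": "10.0.0.0/16",
    "aws-vpc-us-east-2": "10.1.0.0/16",
    "aws-vpc-us-west-2": "10.2.0.0/16",
    "gcp-vpc-us-east1": "10.10.0.0/16",
    "gcp-vpc-us-central1": "10.1.0.0/20",  # deliberately overlaps aws-vpc-us-east-2
    "onprem-datacenter": "172.16.0.0/12",
}

# Hypothetical BGP ASNs, one per BGP speaker.
ASNS = {
    "aws-transit-gateway": 64512,  # default Amazon-side ASN for a new Transit Gateway
    "gcp-cloud-router-us-east1": 65010,
    "onprem-router": 65020,
}


def overlapping_ranges(networks: dict[str, str]) -> list[tuple[str, str]]:
    """Return every pair of named networks whose CIDR blocks overlap."""
    parsed = {name: ipaddress.ip_network(cidr) for name, cidr in networks.items()}
    return [
        (a, b)
        for (a, net_a), (b, net_b) in combinations(parsed.items(), 2)
        if net_a.overlaps(net_b)
    ]


def duplicate_asns(asns: dict[str, int]) -> list[int]:
    """Return ASNs assigned to more than one BGP speaker."""
    counts = Counter(asns.values())
    return sorted(asn for asn, count in counts.items() if count > 1)


def is_private_asn(asn: int) -> bool:
    """True if asn is in a private-use range reserved by RFC 6996."""
    return 64512 <= asn <= 65534 or 4200000000 <= asn <= 4294967294


if __name__ == "__main__":
    for a, b in overlapping_ranges(NETWORKS):
        print(f"Overlap: {a} ({NETWORKS[a]}) and {b} ({NETWORKS[b]})")
    print("Duplicate ASNs:", duplicate_asns(ASNS) or "none")
    for name, asn in ASNS.items():
        if not is_private_asn(asn):
            print(f"Note: {name} uses public ASN {asn}")
```

Run as-is, it reports the deliberate collision between aws-vpc-us-east-2 and gcp-vpc-us-central1 and finds no duplicate ASNs. Running a check like this before creating attachments is far cheaper than untangling an overlap after traffic is flowing.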

Conclusion

I’ve covered a lot of cloud networking terms and concepts in these two posts, as well as an extensive practical example of how to connect AWS and GCP with your on-premises network. Even so, if you’re designing such a network, keep in mind that this is just the tip of the iceberg.

Before embarking on your multicloud networking journey, arm yourself with as much networking documentation from public cloud providers as you can. Consult the internet for tutorials, Reddit posts, and even third-party mailing lists and discussion boards. If it’s an option and within your budget, sign up for professional support from your cloud vendor. It’s expensive, but it can be a lifesaver in scenarios like the one I’ve covered here.

Remember that production networks cannot tolerate outages. If you already run sensitive workloads in a public cloud and want to connect them to a data center or another public cloud provider, you simply cannot afford mistakes, because they will cost you dearly.