AI is a hot topic at the moment, with one of Microsoft’s translation services achieving near-human accuracy translating from Chinese to English. Facial recognition, another expanding field, is forecast to grow by over 20% this year.

These are just two of the many uses of AI. At the rate AI innovation and implementation are growing, it can be hard to keep up with new developments.

Azure Cognitive Services has effectively democratized these and other forms of artificial intelligence for the technical and developer community. In this blog post, we’ll introduce a set of these services. Specifically, we’ll take a closer look at three of the Azure Vision Services’ APIs: the Computer Vision API, the Custom Vision API, and the Form Recognizer API, to see how they can be used to produce actionable insights from image-related content, such as identifying objects and people in photographs.

What Is Azure Cognitive Services?

Azure Cognitive Services is a comprehensive family of AI services that helps you build intelligent software applications. No machine-learning expertise is required to use them; an API call alone can embed AI capabilities into your existing applications.

Azure Cognitive Services includes 30 APIs that belong to the following categories:

- Decision APIs: Help you make decisions faster, detect anomalies, or moderate content

- Vision APIs: Assist you in identifying and analyzing content in images, videos, and digital ink

- Speech APIs: Integrate speech processing into apps (e.g., speech to text)

- Search APIs: Give you the power of Bing in a box

- Language APIs: Let you add natural language processing and text analytics to your applications, including chatbots

Azure Cognitive Services can be deployed anywhere in the cloud, and, if data privacy is a concern, containers can be used to give you total flexibility and control over API consumption.

How Are Azure Cognitive Services Consumed?

The consumption of Azure Cognitive Services has been standardized. It typically involves the following steps:

- Provision the Cognitive Service in the Microsoft Azure Portal.

- Take note of the API key.

- Build a REST request with the required parameters.

- Invoke the request and parse the API response.

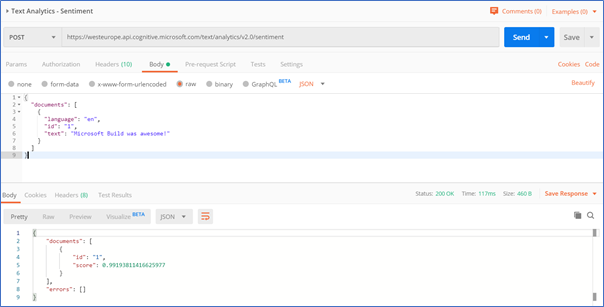

It’s that easy. In the screenshot below, an API call is being made to the Text Analytics API using Postman:

The text being analyzed here is the phrase, “Microsoft Build was awesome!” In the bottom half of the screenshot, you can see the underlying emotional sentiment represented as a number. The closer to 1.0 the number is, the more positive the text is. In this case, Text Analytics has determined that the phrase was very positive.
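The four steps above can also be sketched in a few lines of Python. This is a minimal sketch, not the exact call from the screenshot: it assumes the v2.1 sentiment route of the Text Analytics API (the version that returns a single score between 0 and 1, as described above), and the endpoint and key are placeholders you would copy from your own resource in the Azure Portal. The function names are ours, chosen for illustration.

```python
import json
import urllib.request


def build_sentiment_request(endpoint, api_key, text, language="en"):
    """Step 3: build (but do not send) the REST request."""
    # Assumption: the v2.1 sentiment route; newer versions use a different path
    # and return a different response shape.
    url = endpoint.rstrip("/") + "/text/analytics/v2.1/sentiment"
    body = {"documents": [{"id": "1", "language": language, "text": text}]}
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Ocp-Apim-Subscription-Key": api_key,  # Step 2: the API key
            "Content-Type": "application/json",
        },
        method="POST",
    )


def parse_sentiment_scores(response_body):
    """Step 4: map each document id to its sentiment score."""
    payload = json.loads(response_body)
    return {doc["id"]: doc["score"] for doc in payload.get("documents", [])}


def analyze_sentiment(endpoint, api_key, text):
    """Send the request and return the parsed scores (needs a live resource)."""
    request = build_sentiment_request(endpoint, api_key, text)
    with urllib.request.urlopen(request) as response:
        return parse_sentiment_scores(response.read().decode("utf-8"))
```

With a real endpoint and key, `analyze_sentiment(endpoint, key, "Microsoft Build was awesome!")` would return a dictionary keyed by document id, with a score close to 1.0 for positive text.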

Historically, implementing this kind of functionality would have involved substantial development effort, since you can’t simply keyword-match or regex your way through this. You’d have to turn to something like Bayes’ theorem to build a classifier, build a data model to support the classifier, and then source and cleanse training data. With the power of the Text Analytics API, however, you don’t need to do any of this.

Computer Vision API

First up is the Computer Vision API. It ships pre-trained with a collection of models that deploy computer vision-related capabilities into your existing software application. Computer Vision makes it easy for developers to process and label visual content. At the time of writing, this API could identify more than 10,000 objects and had support for 25 languages.

Key Features

Some other key features of the API include the ability to:

- Detect the type of image, e.g., clip art or a line drawing

- Determine the image width and height

- Detect adult content and assign an “adult score”

- Automatically assign an image category, e.g., “transport”

This API can describe objects in images, detect the existence of human beings, and even auto-generate human-readable tags, enabling you to process visual content very efficiently.
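To make the features above concrete, here is a minimal sketch of an Analyze Image call and of pulling the interesting fields out of the response. The `v3.2` version segment, the feature list, and the helper names are assumptions for illustration; check the API reference for the version your resource supports. The response fields used here (`metadata`, `adult`, `categories`, `description`) follow the documented Analyze Image response shape.

```python
import json
import urllib.parse
import urllib.request

# Features to request; each one adds a section to the JSON response.
DEFAULT_FEATURES = ("Categories", "Description", "Adult", "ImageType")


def build_analyze_request(endpoint, api_key, image_url,
                          features=DEFAULT_FEATURES, api_version="v3.2"):
    """Build a POST request asking Computer Vision to analyze an image URL."""
    query = urllib.parse.urlencode({"visualFeatures": ",".join(features)})
    url = f"{endpoint.rstrip('/')}/vision/{api_version}/analyze?{query}"
    return urllib.request.Request(
        url,
        data=json.dumps({"url": image_url}).encode("utf-8"),
        headers={
            "Ocp-Apim-Subscription-Key": api_key,
            "Content-Type": "application/json",
        },
        method="POST",
    )


def summarize_analysis(payload):
    """Flatten the parts of the response that the feature list above mentions."""
    metadata = payload.get("metadata", {})
    description = payload.get("description", {})
    captions = description.get("captions", [])
    return {
        "width": metadata.get("width"),
        "height": metadata.get("height"),
        "format": metadata.get("format"),
        "adult_score": payload.get("adult", {}).get("adultScore"),
        "categories": [c["name"] for c in payload.get("categories", [])],
        "caption": captions[0]["text"] if captions else None,
        "tags": description.get("tags", []),
    }
```

Sending the request with `urllib.request.urlopen` and passing the decoded JSON to `summarize_analysis` gives you a small dictionary that is easy to store alongside, say, engagement numbers for the same image.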

Use Cases

An ideal use case for this API is the processing of social media data. This API can help you to identify the most popular types of images being shared online and, in the process, optimize your marketing campaigns.

While the Instagram Graph API lets you extract key numbers from its platform, it can’t determine the type of content that is being posted. With this API, you can, for example, find out if images that contain smiling faces receive a higher volume of comments, likes, shares, and impressions than images of kittens. (I wrote an in-depth miniseries about this topic.)

This API can also be used for enhanced reporting, assisting you in deriving insights from your corporate data stores.

Custom Vision API

The Custom Vision API picks up where the Computer Vision API left off. Occasionally, the Computer Vision API will incorrectly classify your image, perhaps because it’s an edge case unique to your business or an unusual image that the API can’t identify.

Features

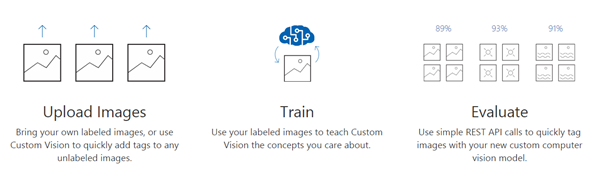

With this API, you supply your own training data in the form of a collection of images that best represent the ones you expect to be processing. After you “label” the training data and train the model, a REST API endpoint is produced. This endpoint can be easily integrated with your existing software application, letting you perform custom image classification and object detection.
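Calling the resulting endpoint looks much like the other services. The sketch below assumes the v3.0 prediction route for image classification by URL; the project ID and published iteration name are placeholders you would take from your own Custom Vision project, and the 0.5 threshold and helper names are illustrative choices rather than recommendations.

```python
import json
import urllib.request


def build_classify_request(endpoint, prediction_key, project_id,
                           published_name, image_url):
    """Build a POST request to a published Custom Vision classifier."""
    # Assumption: the v3.0 prediction route for classifying an image by URL.
    url = (
        f"{endpoint.rstrip('/')}/customvision/v3.0/Prediction/{project_id}"
        f"/classify/iterations/{published_name}/url"
    )
    return urllib.request.Request(
        url,
        data=json.dumps({"Url": image_url}).encode("utf-8"),
        headers={
            "Prediction-Key": prediction_key,
            "Content-Type": "application/json",
        },
        method="POST",
    )


def best_label(payload, min_probability=0.5):
    """Return the most probable tag name, or None if nothing is confident enough."""
    predictions = payload.get("predictions", [])
    if not predictions:
        return None
    top = max(predictions, key=lambda p: p["probability"])
    if top["probability"] < min_probability:
        return None
    return top["tagName"]
```

Returning `None` below a threshold keeps low-confidence guesses out of downstream systems; you would tune that cutoff against your own labeled images.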

Use Cases

The main use cases for this API are processing images that are unusual or are unique to your business and processing images in situations where you need full control over the labels being applied.

Form Recognizer API

The Form Recognizer API is built on machine learning and lets you identify key/value pairs and table data in form documents such as receipts or invoices. One of the coolest features of this API is that it needs only about five sample forms as training data to be properly trained.

Form Recognizer takes in your form data, ingests the text, and quickly outputs the structured data it finds in the form. Like the other APIs we’ve looked at, it’s available through a series of REST API endpoints, making it easy for developers to integrate it into existing software applications. It’s like an OCR tool on steroids!
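One practical difference from the other APIs is that, in the v2.x versions of Form Recognizer, analysis is asynchronous: you submit a form, receive an `Operation-Location` URL in the response headers, and poll that URL until the status is `succeeded`. The sketch below covers the polling and parsing half under that assumption. The helper names are ours, and the `pageResults` / `keyValuePairs` layout assumes a custom model trained without labels; other model types return their fields in a different section of the result.

```python
import json
import time
import urllib.request


def make_status_fetcher(operation_url, api_key):
    """Return a function that GETs the operation URL and decodes the JSON."""
    def fetch_status():
        request = urllib.request.Request(
            operation_url,
            headers={"Ocp-Apim-Subscription-Key": api_key},
        )
        with urllib.request.urlopen(request) as response:
            return json.loads(response.read().decode("utf-8"))
    return fetch_status


def wait_for_result(fetch_status, poll_seconds=1.0, max_polls=30):
    """Poll until the analysis succeeds, fails, or we give up."""
    for _ in range(max_polls):
        payload = fetch_status()
        status = payload.get("status")
        if status == "succeeded":
            return payload
        if status == "failed":
            raise RuntimeError("Form analysis failed")
        time.sleep(poll_seconds)  # still "notStarted" or "running"
    raise TimeoutError("Form analysis did not finish in time")


def extract_key_value_pairs(payload):
    """Collect the key/value text pairs found on every page of the form."""
    pairs = {}
    pages = payload.get("analyzeResult", {}).get("pageResults", [])
    for page in pages:
        for pair in page.get("keyValuePairs", []):
            pairs[pair["key"]["text"]] = pair["value"]["text"]
    return pairs
```

Passing the fetcher in as a function keeps the polling loop easy to test without a live resource: in production you would pass `make_status_fetcher(operation_url, api_key)`, and in a unit test a stub that returns canned payloads.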

Features

At the time of writing, the main features that ship with Form Recognizer are:

- Custom models: Extract key/value pairs from your forms after training on your own data

- Receipts: Extracts data from American-style receipts using an out-of-the-box, pre-trained model

- Layout: Extracts the coordinates of text and tables from a document

Use Cases

Given the amount of information and form data existing on the web, there are many use cases for this API. For example, people in the insurance industry often find themselves processing torrents of insurance documents. This API can be used to extract key data and fields from their paperwork. In HR, you can use the API to automate expense receipt processing and automatically push receipts into your backend system. Health care professionals needing to process patient records from disparate systems can train this API to identify key values in medical records.

These are just some of the many ways this API can be used. It’s unique in that it allows you to supply your own training data for your business and implement fully automated and customized document recognition systems with a limited set of training data. It’s certainly an API worth checking out.

Summary

In this blog post, we’ve shown how developers can tap into the power of AI and machine learning through the use of Azure Cognitive Services—all with little to no prior machine learning experience.

Azure Cognitive Services is empowering developers around the world by making it easy to add AI capabilities to existing software applications. In the coming years, we’ll see the mainstream adoption of services like these, since they help human beings augment their existing skill sets for processing data at scale, detecting anomalies, and making predictions.