With the rise of new computing platforms such as the internet and the cloud, combined with a proliferation of new devices and form factors, distributed computing has become so ubiquitous that we barely register its existence. But as more of us come to rely on distributed computing systems, either as the main focus of our work or as the thing that makes remote work possible, both their benefits and their problems become visible. One of the most serious problems is that traditional ways of debugging and monitoring software no longer work.

In this article, I’ll describe why the software industry is transitioning away from monolithic applications toward distributed computing and microservices. I’ll examine the problems this move creates and explain why existing monitoring solutions no longer work. Finally, I’ll show how distributed tracing solves these problems and how to get started.

Software Monitoring Uncertainty Principle

Typically, a distributed computing environment decomposes a monolithic application into several much smaller microservices. In other words, instead of creating one giant app that does everything at a specific location, you create many smaller services designed to handle specific tasks, such as authentication, data access, or calculations.

Instead of relying on a single system, with backups in one or two locations, a distributed system includes multiple copies of each service running in multiple locations. This results in a system that is highly scalable, redundant, and performant.

As with many great ideas, the problems begin when people start using this one to do real work.

In a distributed application, a user initiates a task that sends a request to a specific endpoint, and the system then passes messages between the relevant services. This gives you the computing equivalent of Heisenberg’s uncertainty principle, which, simply put, states that you cannot know both the position and the momentum of a subatomic particle with arbitrary precision at the same time: the more precisely you measure one, the less precisely you can know the other.

Similarly, in a distributed application, you can monitor either the messages passing through your system or the machines that host its services, but it is hard to see both clearly at once. You also need to decide whether to monitor the individual subsystems or the entire system. When things go wrong, do you try to find the specific source of the problem, or do you deal with it at the highest level of abstraction?

IOD is a content creation agency specializing in high quality tech content for tech experts. The IOD Blog publishes weekly content geared toward solving problems, providing tips & tricks, and comparing tech solutions.

Creating Observability with Distributed Tracing

If distributed computing is like quantum physics, monolithic computing follows a more classical model. Just as Newtonian physics lets you precisely measure and track large objects, a monolithic application keeps all of your software in one place, and since you have access to the source code, you can see how a request is passed between individual functions. At the system level, you know which machine the app is running on, and it depends on a relatively small, finite set of related resources, such as networking and storage. So, if something goes wrong, you can usually find the root cause through a process of elimination.

The key to monitoring a distributed, microservices-based architecture is observability.

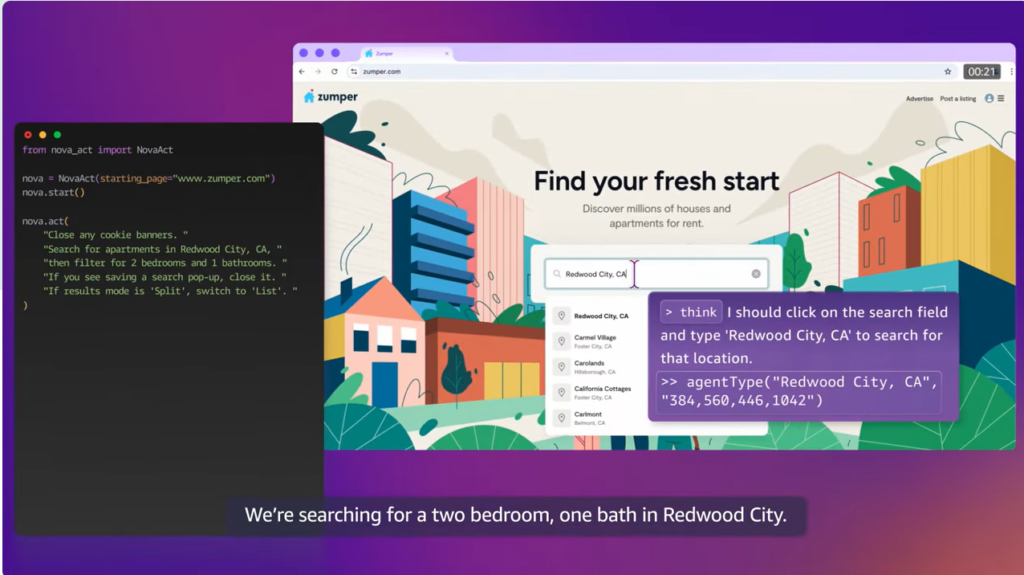

Observability is an engineering principle that lets you infer a system’s internal state from its external outputs. For distributed platforms, observability can be achieved through distributed tracing, a holistic monitoring approach that follows an individual request along its path from origin to destination. Each trace is persisted and assigned a unique ID, and it is broken down into spans, each of which records one step (a single operation or unit of work) along the request’s path. A span identifies the service or component that performed that step. Like the trace it belongs to, each span is assigned a unique ID and carries timestamps, along with a reference to its parent span, which is how the steps are stitched back together. A span can include additional data and metadata as well.
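To make the trace and span vocabulary concrete, here is a minimal Python sketch of the data a tracer might record for one request. The `Span` class, its field names, and the service names are hypothetical illustrations, not the data model of any particular framework; real implementations add more, such as status codes and events.

```python
import secrets
import time
from dataclasses import dataclass, field
from typing import Optional


def new_trace_id() -> str:
    """Return a random 128-bit trace ID as 32 hex characters."""
    return secrets.token_hex(16)


@dataclass
class Span:
    """One step in a request's path (hypothetical minimal model)."""
    name: str                                # the operation, e.g. "verify_token"
    service: str                             # the entity that performed the step
    trace_id: str                            # shared by every span in one trace
    parent_span_id: Optional[str] = None     # None marks the root span
    span_id: str = field(default_factory=lambda: secrets.token_hex(8))
    start_time: float = field(default_factory=time.time)
    end_time: Optional[float] = None
    attributes: dict = field(default_factory=dict)  # extra data and metadata

    def finish(self) -> None:
        """Record the end timestamp for this step."""
        self.end_time = time.time()


# One request entering an API gateway, which calls an auth service.
trace_id = new_trace_id()
root = Span("GET /checkout", "api-gateway", trace_id)
auth = Span("verify_token", "auth-service", trace_id, parent_span_id=root.span_id)
auth.attributes["user.id"] = "u-123"
auth.finish()
root.finish()
```

The shared `trace_id` groups both spans into one trace, while `parent_span_id` records that the auth check happened inside the gateway's handling of the request.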

This information is useful for debugging because it tells you when and where along the request’s path a problem occurred. That matters, because locating the source of a problem is often the most frustrating part of debugging, and it usually takes longer than anticipated.
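As a rough illustration of how span timestamps point to the source of a problem, the following Python sketch takes the spans of one recorded trace and reports which services flagged an error and which span spent the most time on its own work (its duration minus its direct children's durations). The `RecordedSpan` type and the sample trace are made up for illustration, and the self-time calculation assumes a span's children do not run concurrently.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class RecordedSpan:
    span_id: str
    parent_id: Optional[str]
    service: str
    start: float          # seconds since the trace began
    end: float
    error: bool = False

    @property
    def duration(self) -> float:
        return self.end - self.start


def self_time(span: RecordedSpan, spans: List[RecordedSpan]) -> float:
    """Duration minus direct children's durations (assumes children don't overlap)."""
    children = [s for s in spans if s.parent_id == span.span_id]
    return span.duration - sum(c.duration for c in children)


def locate_problem(spans: List[RecordedSpan]):
    """Return (services that reported errors, span with the largest self time)."""
    errored = [s.service for s in sorted(spans, key=lambda s: s.start) if s.error]
    slowest = max(spans, key=lambda s: self_time(s, spans))
    return errored, slowest


# A hypothetical trace: the gateway calls auth and orders; orders queries a database.
trace = [
    RecordedSpan("a", None, "api-gateway", 0.000, 1.200),
    RecordedSpan("b", "a", "auth-service", 0.010, 0.060),
    RecordedSpan("c", "a", "orders-service", 0.070, 1.150),
    RecordedSpan("d", "c", "inventory-db", 0.080, 1.100, error=True),
]

errored, slowest = locate_problem(trace)
print(errored, slowest.service)  # ['inventory-db'] inventory-db
```

Although the gateway span is the longest overall, almost all of its time is spent waiting on its children; subtracting child time is what points at the database call.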

From Theory to Practice

The foundation of distributed tracing is being able to integrate your existing software with a tracing platform. You create this integration layer by instrumenting your code with calls to a tracing API, which reports what your code is doing to a distributed tracing platform. The good news is that three open source tracing frameworks are available: OpenCensus, OpenTracing, and OpenTelemetry. The bad news is that now you need to pick one.

OpenCensus

OpenCensus is the open source version of Census, Google’s internal tracing libraries. OpenCensus can be viewed as a one-stop shop for application monitoring: it is designed to provide context propagation and to collect both traces and metrics. Context propagation carries identifying information about a request, such as its trace ID, from one service to the next, so that each service’s work can be matched to the request that triggered it. This is what enables developers to trace requests across a system.
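Context propagation usually works by having the calling service write the trace ID and its current span ID into the outgoing request's headers, and having the receiving service read them back before it starts its own span. The Python sketch below uses the W3C Trace Context `traceparent` header format, one widely used standard for this. The `inject` and `extract` helper names are hypothetical, and the parsing covers only version `00` of the format.

```python
import re
import secrets
from typing import Optional, Tuple

# version "00" - 32-hex trace ID - 16-hex parent span ID - 2-hex flags
_TRACEPARENT = re.compile(r"00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})")


def inject(headers: dict, trace_id: str, span_id: str, sampled: bool = True) -> None:
    """Caller side: write the current trace context into outgoing headers."""
    flags = "01" if sampled else "00"
    headers["traceparent"] = f"00-{trace_id}-{span_id}-{flags}"


def extract(headers: dict) -> Optional[Tuple[str, str]]:
    """Callee side: return (trace_id, parent_span_id), or None if absent or invalid."""
    match = _TRACEPARENT.fullmatch(headers.get("traceparent", ""))
    if not match:
        return None
    trace_id, parent_id, _flags = match.groups()
    if trace_id == "0" * 32 or parent_id == "0" * 16:  # all-zero IDs are invalid
        return None
    return trace_id, parent_id


# Service A starts a trace and calls service B.
trace_id, span_a = secrets.token_hex(16), secrets.token_hex(8)
outgoing = {}
inject(outgoing, trace_id, span_a)

# Service B continues the same trace; its new span's parent is A's span.
received_trace_id, parent_span = extract(outgoing)
span_b = secrets.token_hex(8)
```

Because service B reuses the received trace ID and records A's span as its parent, the tracing backend can later join both services' spans into a single trace.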

With context propagation in place, OpenCensus collects distributed traces and captures the relevant time-series metrics, which measure performance and can point to specific issues. The core of OpenCensus is a set of libraries available for most popular high-level languages. These libraries can be downloaded as packages, and the source code is hosted on GitHub. Since the framework’s initial release, it has gained wide support across the software industry, including from Microsoft, VMware, and Comcast.
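The metrics side can be pictured as latency measurements grouped into fixed time windows, which is what turns individual measurements into a time series. The following Python sketch is a hypothetical, in-memory stand-in for a metrics backend, not the OpenCensus stats API: it buckets per-service latencies by minute and reports the count, mean, and nearest-rank 95th percentile for each bucket, so a latency spike shows up in the window where it happened.

```python
import math
from collections import defaultdict


class LatencyMetrics:
    """Hypothetical time-series store: latency samples bucketed per service per window."""

    def __init__(self, bucket_seconds: int = 60):
        self.bucket_seconds = bucket_seconds
        self._samples = defaultdict(list)  # (service, bucket_start) -> [latency_ms]

    def record(self, service: str, latency_ms: float, timestamp: float) -> None:
        bucket = int(timestamp // self.bucket_seconds) * self.bucket_seconds
        self._samples[(service, bucket)].append(latency_ms)

    def summary(self, service: str):
        """Return [(bucket_start, count, mean_ms, p95_ms), ...] in time order."""
        rows = []
        for (svc, bucket), values in sorted(self._samples.items()):
            if svc != service:
                continue
            ordered = sorted(values)
            p95 = ordered[math.ceil(0.95 * len(ordered)) - 1]  # nearest-rank percentile
            rows.append((bucket, len(values), sum(values) / len(values), p95))
        return rows


metrics = LatencyMetrics()
# (seconds since start, latency in ms): one slow call in the first minute.
for t, ms in [(0, 20), (10, 25), (30, 400), (65, 22), (90, 24)]:
    metrics.record("orders-service", ms, t)

rows = metrics.summary("orders-service")
```

The first minute's 95th percentile of 400 ms stands out against the second minute's 24 ms, which is the kind of signal that tells you where to start looking in the traces.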

OpenTracing

OpenTracing tackles distributed tracing very differently from OpenCensus. One downside of the OpenCensus approach is that if your language or use case is not supported, you’ll need to manage without it, extend the existing code, or build your own solution. Instead of giving you everything you need, OpenTracing defines an open standard and lets different organizations and companies implement it.
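The difference between shipping an implementation and shipping a standard can be sketched with an abstract interface: application code is written against the interface, and any vendor can supply an implementation. In the hypothetical Python sketch below, the method names `start_span`, `set_tag`, and `finish` echo OpenTracing's vocabulary, but the classes are illustrations, not the `opentracing` package's API.

```python
from abc import ABC, abstractmethod
from typing import Any, Dict, List


class SpanHandle(ABC):
    """What instrumented code may do with a span, per the (hypothetical) standard."""

    @abstractmethod
    def set_tag(self, key: str, value: Any) -> None: ...

    @abstractmethod
    def finish(self) -> None: ...


class Tracer(ABC):
    @abstractmethod
    def start_span(self, operation_name: str) -> SpanHandle: ...


# Implementation 1: does nothing, useful when tracing is switched off.
class NoopSpan(SpanHandle):
    def set_tag(self, key: str, value: Any) -> None:
        pass

    def finish(self) -> None:
        pass


class NoopTracer(Tracer):
    def start_span(self, operation_name: str) -> SpanHandle:
        return NoopSpan()


# Implementation 2: stands in for a vendor's tracer; keeps finished spans in memory.
class RecordingSpan(SpanHandle):
    def __init__(self, tracer: "RecordingTracer", operation_name: str):
        self.tracer = tracer
        self.operation_name = operation_name
        self.tags: Dict[str, Any] = {}

    def set_tag(self, key: str, value: Any) -> None:
        self.tags[key] = value

    def finish(self) -> None:
        self.tracer.finished.append(self)


class RecordingTracer(Tracer):
    def __init__(self):
        self.finished: List[RecordingSpan] = []

    def start_span(self, operation_name: str) -> SpanHandle:
        return RecordingSpan(self, operation_name)


# Application code depends only on the Tracer interface.
def checkout(tracer: Tracer, order_id: str) -> str:
    span = tracer.start_span("checkout")
    span.set_tag("order.id", order_id)
    try:
        return f"order {order_id} placed"
    finally:
        span.finish()


recorder = RecordingTracer()
checkout(recorder, "A1")
checkout(NoopTracer(), "B2")
```

Because `checkout` only knows about `Tracer`, switching implementations means passing in a different object rather than rewriting the instrumentation. The flip side, as the next paragraph notes, is that the quality of what you get depends entirely on whoever wrote the implementation.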

The advantage of OpenTracing is that it can offer a wider choice of potential solutions. Furthermore, the Cloud Native Computing Foundation (CNCF) gave this approach its blessing by accepting OpenTracing as a hosted project. The disadvantage, however, is that someone still needs to do the work, so you are at the mercy of specific developers and third parties. As with similar standardization initiatives, the results have varied widely in consistency and quality.

OpenTelemetry

OpenTelemetry aims to combine the different approaches of OpenCensus and OpenTracing by merging the two projects. As a result, it provides both a standard that defines how tracing tools should be designed and implemented and the APIs, libraries, and tools you need to get the job done. As with most mergers, the time between the initial announcement and any tangible result was considerable: almost a year passed between the original announcement in May 2019 and the release of the official beta.

This release includes software development kits (SDKs) for languages such as JavaScript and Python, as well as integrations with APIs and frameworks such as gRPC. It also includes a collection of tools and a full set of documentation. According to the release announcement, these tools are ready for integration, but developers should proceed with caution, since they have not been fully tested and could undergo radical changes in the coming months.

Conclusion and Recommendations

Distributed computing holds great promise and has already delivered great benefits. As with any revolution, it seems to solve major problems while simultaneously creating a number of new ones. Distributed tracing addresses one of those new problems: it provides a way to monitor distributed applications and makes it easier to migrate from monolithic software to distributed applications that leverage a microservice architecture and cloud services. Once you’ve made this transition, distributed tracing can help you not only see what’s happening, but also find the source of system-wide and local issues and fix them.

Again, in theory, this approach is great, but the devil is in the details, specifically in the implementation. Two years before the creation of OpenTelemetry, I would have recommended OpenCensus over OpenTracing, as it was the more mature of the two platforms. Its approach may be more constrained, but it gives you 90% to 95% of what you need. A year ago, I would have recommended choosing whichever existing platform best met your needs rather than a new and untried option.

While these recommendations are still relevant, OpenTelemetry seems to be forging ahead, while work has stopped on its predecessors. Despite the aforementioned risks of adopting a new and relatively immature platform, OpenTelemetry seems to be the only option going forward. This presents a dilemma similar to the one Apple developers faced when choosing between the devil they knew, Objective-C, and the new and shiny Swift. In most cases, a few brave individuals took the plunge and got cut on the bleeding edge, while everyone else took a wait-and-see attitude.

Unless you have a massive tracing problem that you need to fix right now, it’s probably best to hang on a bit and see how things shake out.